ISO/IEC 42005:2025 – Complete Guide

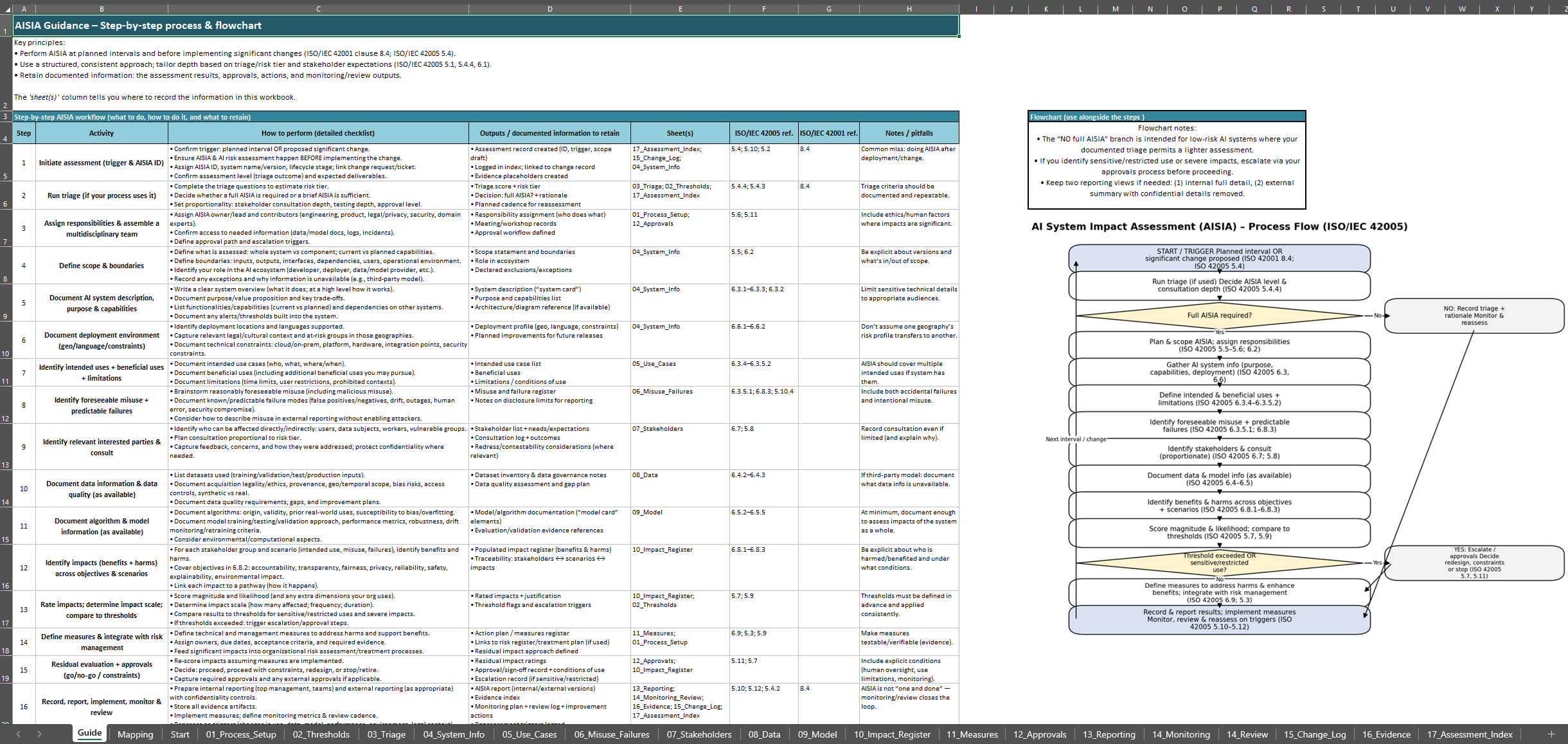

ISO/IEC 42005:2025 is a guidance standard (not a certification standard) that helps organizations conduct AI system impact assessments in a structured, repeatable manner.

What is ISO/IEC 42005?

ISO/IEC 42005:2025 focuses specifically on evaluating how a particular AI system – across its design, development, deployment, and use – can affect people and society, including any unintended or reasonably foreseeable misuse of the AI.

In practice, this means looking beyond technical performance metrics and considering broader implications: Who might be impacted by the AI? How might the AI’s decisions or actions influence individuals or communities?

Why is ISO/IEC 42005 important?

As AI technologies reshape our daily lives and business operations, there is a growing imperative to ensure AI is deployed responsibly and ethically.

ISO 42005 plays a crucial role in this by providing a common framework to assess and address AI’s impacts both before and after these systems are deployed.

ISO 42005 Clause 5

Developing and implementing an AI system impact assessment process.

5.1 General: Establish your AI impact assessment process

ISO/IEC 42005 expects a structured, consistent way to perform and document AI system impact assessments, tailored to your organization.

Your approach should reflect internal factors (context, governance, obligations, intended use, risk appetite) and external factors (laws and regulator guidance, cultural norms, incentives and consequences, market trends).

5.2 Documenting the process

Document your AI system impact assessment process, including methodology, roles, inputs, outputs, and decision-making workflow.

5.3 Integration with other organizational management processes

Align AI impact assessments with existing governance, risk management, and compliance activities to ensure efficiency and consistency.

5.4 Timing of AI system impact assessment

Define when assessments are conducted, including during design, pre-deployment, and after major system changes.

5.5 Scope of the AI system impact assessment

Clarify which AI systems, components, or uses are covered and which stakeholders may be affected.

5.6 Allocating responsibilities

Assign clear roles and accountability for performing, reviewing, and approving assessments across multidisciplinary teams.

5.7 Establishing thresholds for sensitive uses, restricted uses and impact scales

Identify high-risk or sensitive AI applications and define thresholds that trigger in-depth assessment and approval.
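
As a rough illustration of how such thresholds could be written down, the sketch below maps a use case to an assessment tier. The category names, the `UseCase` type, the `assessment_tier` helper, and the 10,000-person scale threshold are all hypothetical assumptions for this example; ISO/IEC 42005 leaves the actual thresholds to each organization.

```python
from dataclasses import dataclass

# Assumption: example categories an organization might flag; not taken from the standard.
SENSITIVE_USES = {"hiring", "credit_scoring", "medical_triage"}
RESTRICTED_USES = {"covert_surveillance"}


@dataclass(frozen=True)
class UseCase:
    name: str
    category: str
    affected_people: int  # estimated number of people affected


def assessment_tier(use_case: UseCase, scale_threshold: int = 10_000) -> str:
    """Map a use case to a hypothetical assessment tier."""
    if use_case.category in RESTRICTED_USES:
        # Assumed policy: restricted uses are escalated before any work proceeds.
        return "restricted"
    if use_case.category in SENSITIVE_USES or use_case.affected_people >= scale_threshold:
        # Sensitive category or large impact scale triggers an in-depth assessment.
        return "in_depth"
    return "standard"
```

For example, `assessment_tier(UseCase("CV screener", "hiring", 200))` falls into the in-depth tier because of its category, even though few people are affected.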

5.8 Performing the AI system impact assessment

Carry out assessments systematically, including identification of impacts, potential harms, and benefits.

5.9 Analysing the results of the AI system impact assessment

Evaluate the severity and likelihood of identified impacts to guide mitigation and decision-making.
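
One common way to combine severity and likelihood is a simple scoring matrix. The sketch below assumes a 5x5 scale and illustrative cut-offs for "high", "medium", and "low"; the scale labels, the `impact_score` and `priority` helpers, and the cut-off values are assumptions for this example and are not prescribed by ISO/IEC 42005. It covers negative impacts only.

```python
# Assumption: five-point ordinal scales chosen for illustration.
SEVERITY = {"negligible": 1, "minor": 2, "moderate": 3, "major": 4, "severe": 5}
LIKELIHOOD = {"rare": 1, "unlikely": 2, "possible": 3, "likely": 4, "almost_certain": 5}


def impact_score(severity: str, likelihood: str) -> int:
    """Multiply the two ordinal ratings to get a score between 1 and 25."""
    return SEVERITY[severity] * LIKELIHOOD[likelihood]


def priority(score: int) -> str:
    """Bucket a score into a mitigation priority (cut-offs are hypothetical)."""
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"
```

Under these assumptions, an impact rated "major" and "likely" scores 16 and lands in the "high" bucket, which could then route it to a stricter approval path.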

5.10 Recording and reporting

Document findings, mitigation measures, and approvals for transparency and audit readiness.

5.11 Approval process

Define approval workflows and authority levels to ensure proper oversight and accountability for impact assessment outcomes.

5.12 Monitoring and review

Implement ongoing monitoring and periodic review to keep assessments current as AI systems or context evolve.
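
A periodic review rule can be as simple as a date check plus a change flag. The following sketch assumes a yearly review interval and a hypothetical `review_due` helper; the interval is an organizational choice, not a value taken from the standard.

```python
from datetime import date, timedelta


def review_due(last_review: date, today: date,
               interval_days: int = 365, system_changed: bool = False) -> bool:
    """Return True if the assessment should be revisited.

    A review is due when the system or its context has changed, or when
    the assumed review interval has elapsed since the last review.
    """
    return system_changed or (today - last_review) >= timedelta(days=interval_days)
```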

ISO 42005 Clause 6

Documenting the AI system impact assessment.

Maintain comprehensive documentation of the entire assessment process, findings, and decisions.

ISO 42005 puts heavy emphasis on record-keeping: every assessment should produce a report or record that details how the assessment was conducted, what impacts were identified (positive and negative), what mitigation measures or design changes were decided, and who approved them.

6.1 General

Follow a structured approach to document AI system impact assessments, ensuring clarity and consistency.

6.2 Scope of the AI system impact assessment

Define what is included in the assessment and which AI systems or uses are covered.

6.3 AI system information

Document comprehensive information on the AI system, including description, functionalities, purpose, intended uses, and unintended uses.

6.4 Data information and quality

Record the sources, quality, and documentation of data used in AI system development and operation.

6.5 Algorithm and model information

Document algorithm and model details, including development, deployment, and versioning information.

6.6 Deployment environment

Record the operational environment of the AI system, including geography, languages, and complexity constraints.

6.7 Relevant interested parties

Identify stakeholders affected by the AI system, including directly and indirectly impacted parties.

6.8 Actual and reasonably foreseeable impacts

Document potential and actual harms, benefits, and system failures, including reasonably foreseeable misuse.

6.9 Measures to address harms and benefits

Record mitigation actions, enhancements, and plans to maximize positive impacts and reduce negative effects.
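
The documentation topics in 6.2 to 6.9 can be captured in a single record so that gaps are easy to spot. The sketch below is one possible shape, assuming free-text and list fields; the `ImpactAssessmentRecord` class, its field names, and the `missing_sections` helper are hypothetical and not defined by ISO/IEC 42005.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ImpactAssessmentRecord:
    """Hypothetical record with one field per documentation topic (6.2 to 6.9)."""
    scope: str = ""                                              # 6.2
    system_information: str = ""                                 # 6.3
    data_information: str = ""                                   # 6.4
    model_information: str = ""                                  # 6.5
    deployment_environment: str = ""                             # 6.6
    interested_parties: list[str] = field(default_factory=list)  # 6.7
    impacts: list[str] = field(default_factory=list)             # 6.8
    measures: list[str] = field(default_factory=list)            # 6.9


def missing_sections(record: ImpactAssessmentRecord) -> list[str]:
    """Return the names of fields that are still empty, in declaration order."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]
```

A reviewer could call `missing_sections(record)` before approval and send the record back if the returned list is not empty.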

Annex A: Guidance for use with ISO/IEC 42001

This annex provides practical guidance on how to integrate ISO 42005’s impact assessment process into an AI management system based on ISO/IEC 42001. It maps the impact assessment guidance to the corresponding clauses of ISO 42001, helping organizations that already follow ISO 42001 avoid duplication of effort.

Annex A shows how to embed AI impact assessments within the broader AI governance processes defined by ISO 42001, so that doing an impact assessment naturally satisfies parts of the management system and vice versa.

Annex B: Guidance for use with ISO/IEC 23894

Annex B explains the relationship between ISO 42005 and ISO/IEC 23894, which is the standard for AI risk management. It clarifies how the specific activity of an AI impact assessment feeds into the overall risk management lifecycle for AI systems.

For organizations using ISO 23894’s guidance, Annex B helps distinguish general risk management steps from the impact assessment-specific steps, ensuring that AI’s societal and human impacts are properly considered as part of risk management and not overlooked.

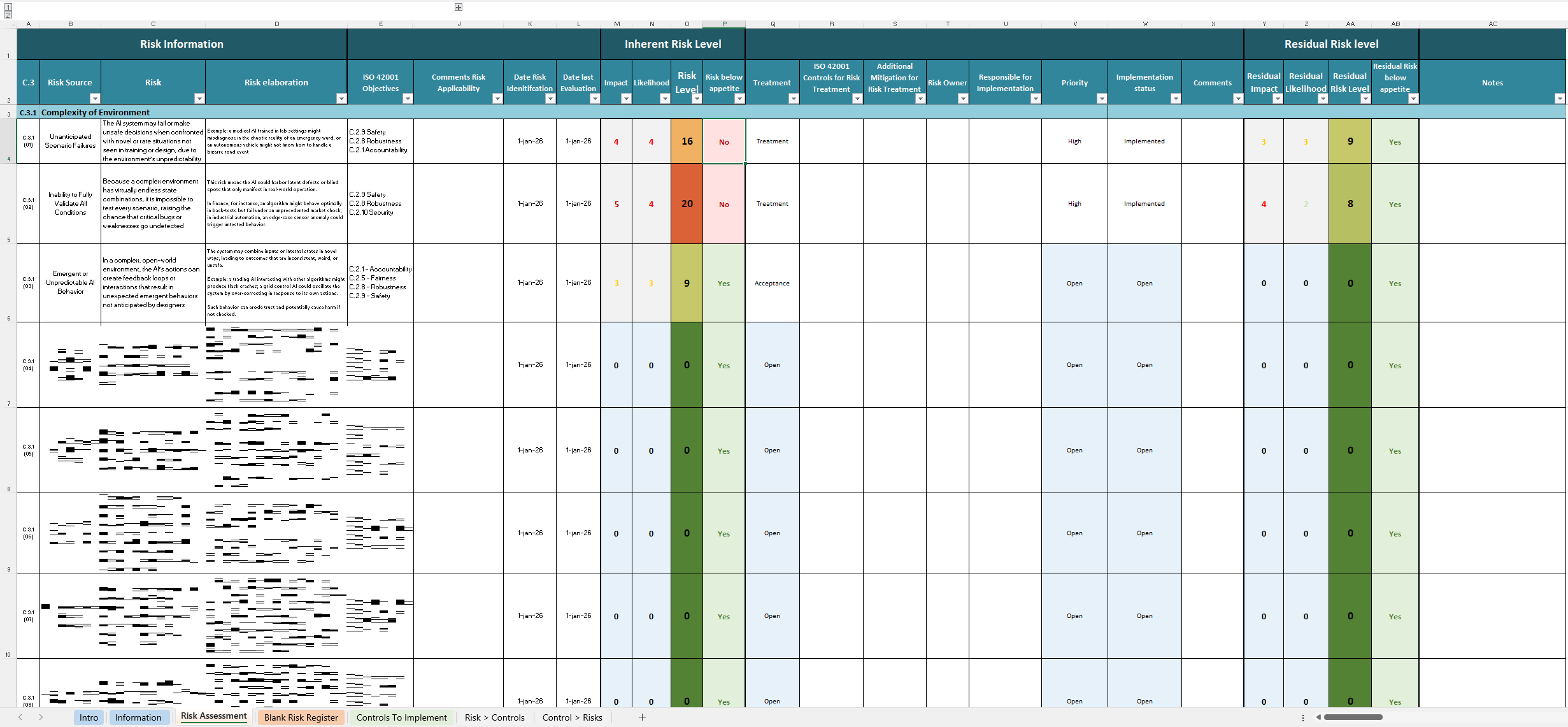

Annex C: Harms and Benefits Taxonomy

This annex offers a structured taxonomy (classification) of potential harms and benefits of AI systems. It essentially provides a template or example framework that organizations can use to systematically categorize the impacts they identify.

Your organization can check that it has thought through different categories of impact (e.g. harms related to bias, privacy, safety, economic effects, as well as benefits like efficiency gains, improved access to services, etc.). Annex C is valuable for showing that the assessment team considered a comprehensive range of possible impacts and didn’t miss major areas.
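
A coverage check of this kind could be sketched as follows. The category names are taken from the examples in the paragraph above, not from the actual Annex C taxonomy, and the `uncovered_categories` helper is a hypothetical name used only for illustration.

```python
# Assumption: categories mirror the examples in the text, not the real Annex C list.
HARM_CATEGORIES = {"bias", "privacy", "safety", "economic"}
BENEFIT_CATEGORIES = {"efficiency", "access_to_services"}


def uncovered_categories(identified: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Report which categories have no identified impact yet.

    `identified` holds (kind, category) pairs, where kind is "harm" or "benefit".
    """
    harms = {category for kind, category in identified if kind == "harm"}
    benefits = {category for kind, category in identified if kind == "benefit"}
    return {
        "harm": HARM_CATEGORIES - harms,
        "benefit": BENEFIT_CATEGORIES - benefits,
    }
```

An empty set for both keys suggests that every category was at least considered; it does not show that each impact was analysed in sufficient depth.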

Annex D: Aligning AI Impact Assessments with Other Assessments

Coordination with other assessments is important. Annex D provides guidance on how to align and coordinate AI system impact assessments with other existing organizational assessment processes, such as privacy, ethics, or environmental impact assessments.

It gives examples or best practices on scheduling combined assessments, sharing information between teams, and building an integrated reporting structure.

Annex E: Example AI Impact Assessment Template

Annex E includes a detailed example template that organizations can use as a starting point for documenting an AI system impact assessment. The template is meant to cover the key elements described in ISO 42005, for instance sections for the system description, identified impacts (with their likelihood and severity), mitigation measures, and approvals.

This annex is extremely useful for practitioners, as it turns the abstract requirements into a concrete format that teams can fill out. Using a template can ensure consistency and completeness across different assessments done by an organization.

The evolution of AI Governance and Ethics

Formalizing the process of AI system impact assessment

ISO/IEC 42005:2025 represents a significant step in the evolution of AI governance and ethics.

Formalizing the process of AI system impact assessment helps your organization move from abstract principles to concrete actions in managing how AI affects society.

This structured approach, which defines when and how to assess impacts, involves the right stakeholders, documents decisions, and continuously monitors outcomes, helps ensure that AI deployments are accompanied by appropriate safeguards and accountability measures.

ISO 42005 embeds the idea that being able to build something is not enough: “we must also ask what its impact will be.”

Proactive commitment to responsible AI

For business and technology leaders, adopting ISO 42005 can demonstrate a proactive commitment to responsible AI.

It provides confidence to regulators, customers, and the public that the organization is focused not only on AI performance but also on how its AI systems affect people and society.

When used in conjunction with ISO 42001 (AI management systems) and related standards like ISO 38507 (AI governance) and ISO 23894 (AI risk management), ISO 42005 becomes part of a holistic framework for trustworthy AI.

While following ISO 42005 may require an investment in time and cross-disciplinary effort, it ultimately facilitates sustainable innovation.

ISO 42005 guides your organization in creating a culture of anticipating and managing impact, and enables AI systems that are not only effective but also aligned with societal values and worthy of public trust.